CORT: A New Baseline for Comparative Opinion Classification by Dual Prompts

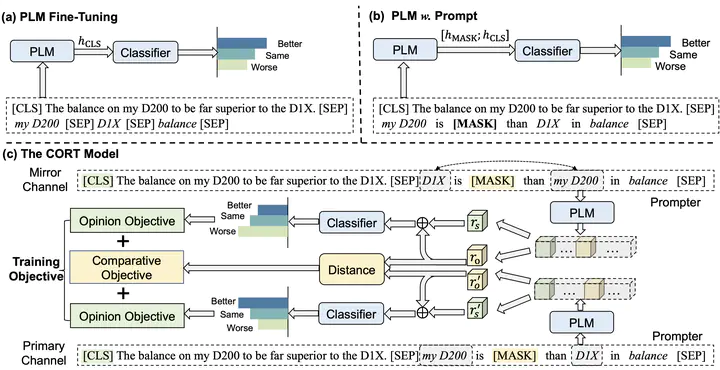

The architecture of CORT

Abstract

Comparative opinion is a common linguistic phenomenon. Such an opinion is expressed by comparing multiple targets on a shared aspect, e.g., “camera A is better than camera B in picture quality”. Among the various subtasks in opinion mining, comparative opinion classification is relatively understudied. Current solutions use rules or classifiers to identify opinions, i.e., better, worse, or same, through feature engineering. Because the features are derived directly from the input sentence, these solutions are sensitive to the order in which the targets are mentioned. For example, “camera A is better than camera B” means the same as “camera B is worse than camera A”, yet the features of these two sentences are completely different. In this paper, we approach comparative opinion classification through prompt learning, taking advantage of the knowledge embedded in pre-trained language models. We design a twin framework with dual prompts, named CORT. This extremely simple model delivers state-of-the-art and robust performance on all benchmark datasets for comparative opinion classification. We believe CORT serves well as a new baseline for comparative opinion classification.
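To make the order-sensitivity problem concrete, the following is a minimal sketch of how a pair of mirrored prompts could be built for one sentence. The template wording, the function names `build_dual_prompts` and `mirror_label`, and the `[MASK]` token are illustrative assumptions, not the prompts used by CORT. The only fact taken from the text above is the symmetry itself: swapping the two targets turns better into worse and leaves same unchanged.

```python
# Hypothetical sketch: the template and all names below are assumptions made
# for illustration; they are not CORT's actual prompts or code.

# Swapping the two targets flips "better" and "worse" and keeps "same".
MIRROR_LABEL = {"better": "worse", "worse": "better", "same": "same"}


def build_dual_prompts(sentence, target_a, target_b, mask_token="[MASK]"):
    """Return a (primary, mirror) pair of cloze-style prompts.

    The primary prompt asks about target_a relative to target_b; the mirror
    prompt asks the same question with the two targets swapped.
    """
    primary = f"{sentence} Compared with {target_b}, {target_a} is {mask_token}."
    mirror = f"{sentence} Compared with {target_a}, {target_b} is {mask_token}."
    return primary, mirror


def mirror_label(label):
    """Map a label for (A, B) to the equivalent label for (B, A)."""
    return MIRROR_LABEL[label]


primary, mirror = build_dual_prompts(
    "camera A is better than camera B.", "camera A", "camera B"
)
print(primary)
print(mirror)
print(mirror_label("better"))
```

Under this sketch, a prediction of better for the primary prompt is consistent only with worse for the mirror prompt, which is the kind of constraint a twin model can exploit regardless of the order in which the sentence mentions the targets.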