Yequan Wang (王业全)

Founder, Researcher

VoxInsight (Yanchuan Zhihui) by Spin Matrix

Beijing Academy of Artificial Intelligence

Peking University

Biography

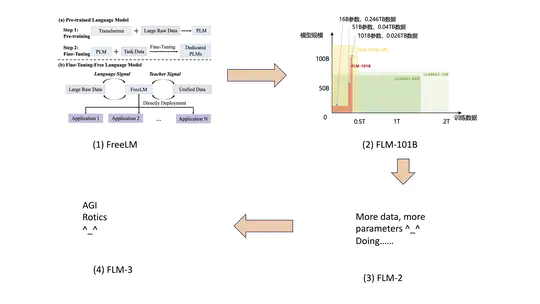

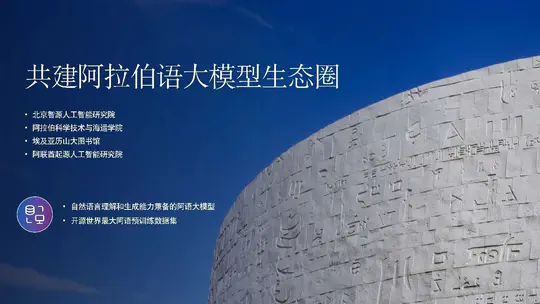

Dr. Wang Yequan is a researcher at the Beijing Academy of Artificial Intelligence (BAAI) and Peking University (PKU). His research focuses on large models. He leads the Recognition Team, which aims to build lower-cost yet more powerful large models and to conduct related large model research, with the eventual goal of achieving AGI.

Dr. Wang received his Ph.D. in Computer Science from Tsinghua University, supervised by Prof. Xiaoyan Zhu and Prof. Minlie Huang. From Sep. 2017 to Sep. 2018, he studied at Nanyang Technological University as a joint Ph.D. candidate, supervised by Associate Prof. Aixin Sun, who is also the Assistant Chair (Academic).

Dr. Wang received the 2022 AI 2000 Most Influential Scholar Honorable Mention in Natural Language Processing.

From November 2022 to November 2025, Dr. Wang serves as the PI for the ‘Next-Generation Artificial Intelligence’ National Key R&D Program.

Team Philosophy for Large Models: Both system capabilities and research capabilities are essential. Without system capabilities, it is not possible to develop large models. Without research capabilities, one can only follow in the footsteps of others, and if the leader in large model development closes its source code, further breakthroughs become impossible.

Google Scholar Citations: 4,000+

ORCID: 0000-0001-7530-6125

- Embodied AI

- Large Model

- Natural Language Processing

- PhD in Artificial Intelligence, 2014-2019, Tsinghua University, Beijing

- Joint PhD Program, 2017-2018, Nanyang Technological University, Singapore

Projects

Selected Publications

Recent Publications

Accomplishments

Contact

- tshwangyequan at gmail dot com

- 3 Haidian St, Zhongguancun, Beijing, CN 100000

- 20th Floor, Block A, DH3 Building

- Zhihu